There’s a growing belief that AI will shape the future in ways humans can’t match. It’s a tempting story, especially for the people who’d quite like to rule that future. But when you work in learning, the picture looks very different.

This week at the AITD neuroscience event I hosted, the room was buzzing with L&D practitioners leaning right into what makes learning genuinely human. Emotion. Connection. Embodiment. Sensemaking. Identity. All the messy, beautiful parts of becoming someone who knows what they’re doing, rather than someone who has simply memorised content.

It struck me how our profession is shifting deeper into humanity at the exact moment the AI hype machine is pulling hard in the opposite direction. AI in education keeps getting framed as clean, efficient, objective and inevitable. A shortcut. A time saver. A tidy solution to the struggle of learning.

But I’m still not convinced. I don’t believe AI belongs at the centre of education. And that’s not because I’m anti-tech or some kind of Luddite, but because we actually need to care about humans learning in human ways.

Learning takes friction. Growth takes discomfort. You’ve got to break things, rebuild them, rethink them and sometimes fall flat on your face before it all comes together. That’s the whole point. And despite the idea that knowledge on demand is a kind of panacea, we still need people with true mastery. AI sidesteps all that and hands you an answer. It might feel convenient, but it strips out the very experiences that build capability and judgment.

And when you hold that against what we’re being promised about AI, the disconnect becomes obvious.

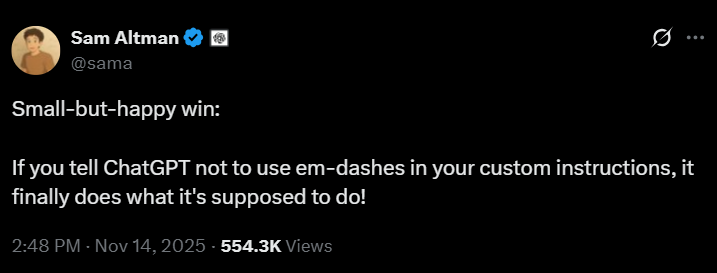

A small moment from this week shows it clearly. Sam Altman posted a lighthearted update celebrating that ChatGPT finally follows a “no em dash” instruction. Funny, yes, but also telling. For all the grand claims about AI reshaping civilisation, a lot of the real work is still tiny corrections and simple preferences. The myth gets bigger and glossier, while the reality stays very ordinary. That’s not a failure. It’s a reminder that AI’s a tool, not the all-knowing oracle the kings and gods like to imagine.

This is why Audrey Watters’ recent piece, AI Grief Observed, hits so hard. She argues that AI isn’t just technology. It’s a cultural project shaped by power, markets and the fantasies of people who want the world to behave predictably. Systems that give neat answers. Interfaces that don’t push back. Workers who trust outputs more than their own experience. Once you see it like this, the hype stops looking accidental. It’s purposeful, and it nudges us into a world built on compliance rather than curiosity, and on tidy answers rather than genuine understanding.

It’s also why Orwell’s idea from Nineteen Eighty-Four lands so sharply right now. Totalising systems don’t fear rebellion as much as they fear connection. When people care about each other in ways you can’t measure or control, the system loses its grip. Real connection, belonging and loyalty are too human to domesticate.

This really matters. The new kings want dashboards and the new gods want predictability. Neither has much tolerance for human connection, because connection creates agency, imagination and resistance. That’s why L&D’s doubling down on belonging, community and the neuroscience of relationships. Not because it’s warm and fluffy, but because it’s powerful.

If love’s a rebellion in Orwell’s world, then human connection is a rebellion in ours. It creates pockets of humanity the kings can’t fully influence and the gods can’t standardise, and it keeps learning human.

Learning’s never been about downloading answers. It’s about identity, experience, struggle, repair and the shared spaces where people think and grow together. That’s the liminal, transformational magic that universities, apprenticeships and community learning all share. It lives in bodies, minds, relationships, culture, context and meaning, not in code. AI can’t replicate that.

AI can help with admin, boost accessibility and support early thinking. That’s all fine. But it shouldn’t design the learning, and it can’t replace the teacher. When we outsource the friction, we outsource the becoming.

The kings will keep selling a future built on automation and efficiency, and the gods will keep promising certainty. But those of us who work in the business of human potential know better.

Learning isn’t about answers. Learning’s about becoming. And becoming takes the full stack of human experience. Curiosity. Embodiment. Emotion. Social connection. Failure. Repair. Reflection. Meaning. Identity. Agency.

All the things machines don’t understand.

All the things that make us inconvenient to control.

All the things that make us human.

Human learning’s a journey that can’t be automated. And that alone is a quiet rebellion worth protecting.